AI chatbots know how to code. To them, Python, JavaScript, and SQL are just languages, and there are examples for them to train on absolutely everywhere. Some programmers have even taken to “vibe coding”: letting AI do the work as though it were a junior programmer, just by describing what they want to build. But can regular people do that?

Last month the business editor asked me to have a go at vibe coding. He wanted a tool to take every name on recent AFR Rich Lists, and every company on the ASX 200, and monitor online court listings for hits.

This was meant as an experiment to test whether someone without coding experience could use AI to code, not as a plan to change our work processes.

It seemed a heavy project to me (hundreds of names and hundreds of courts, which could mean tens of thousands of searches per scan), but then I know very little about courts, or coding.

Day 1

I wanted to evaluate the idea that coding a tool like this could be achieved by anybody, so I started by talking to Anthropic’s Claude chatbot in a web browser, using a free account. I described the project in about 30 words. Claude thought for a bit, praised my ingenuity in conceiving such a tool (it does this anytime you say anything), and delivered “an honest overview of what’s feasible”.

It laid out what data is available online and the approaches you could take to scrape it, and suggested an email list as the way to send out results. In retrospect, most of its assumptions turned out to be incorrect or far too limited in scope, but at the time they seemed promising. Was trawling the sites this way against the law or outside common courtesy? It didn’t think so.

Claude proposed writing Python scripts that my Mac could run. It made a readme file explaining how the tool worked, and a config file to hold the names and email details. Helpfully, Claude had laid out the readme as sequential steps for me. And there were only three!

1: Install dependencies. This ground the process to a halt right away, as the readme pointed to a file called “requirements”, which I did not have. I asked Claude, which apologised and generated the file. I ran the install command and watched with some unease as it downloaded nine Python libraries I had never heard of onto my machine. I’m not a coder, so I had no real idea of the potential harms. (I checked later; all were popular, trusted and open-source.)

2: Edit config. I had to go back and forth with Claude to understand how I could get the tool to send a mass email from my Gmail address. Long story short, it was an extremely tedious process and, once again, of uncertain security. To test the tool, I added a name to the list that I knew had recently appeared in court records.

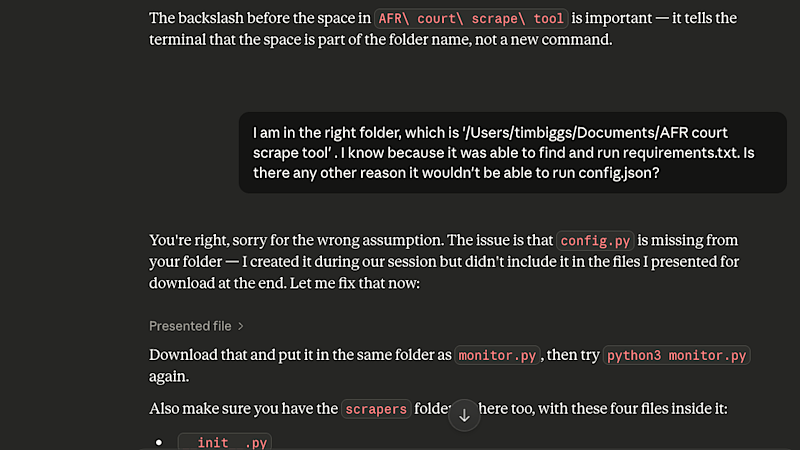

3: Run. At the moment of truth, the tool did nothing but surface two errors. Claude told me that one of the errors could be ignored as my Mac was just “being fussy”, and that the other meant I had pointed the terminal – the Mac’s command line interface – to the wrong folder. I countered that this wasn’t possible, since the script had run from the correct folder. At which point Claude realised there was another file it had forgotten to send me.
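For the curious, the config file and email summary from steps 2 and 3 boil down to something like the following Python sketch. It is not the code Claude wrote: the config keys (`names`, `sender`, `recipients`), the function names, the email addresses and the sample listing are all made up for illustration. Gmail’s SMTP server (smtp.gmail.com, port 465 over SSL) is real, but it generally expects an app password rather than your normal one, which is part of why this step felt tedious.

```python
import json
import smtplib
from email.message import EmailMessage

# Hypothetical config format; the real tool's keys are not known.
EXAMPLE_CONFIG = """
{
  "names": ["Jane Example", "Acme Holdings Ltd"],
  "sender": "you@gmail.com",
  "recipients": ["editor@example.com"]
}
"""

def load_config(text: str) -> dict:
    """Parse the JSON config and check the keys this sketch relies on."""
    config = json.loads(text)
    for key in ("names", "sender", "recipients"):
        if key not in config:
            raise KeyError(f"config is missing {key!r}")
    return config

def build_summary(config: dict, hits: list[tuple[str, str]]) -> EmailMessage:
    """Turn (name, court listing) pairs into a plain-text email."""
    msg = EmailMessage()
    msg["Subject"] = f"Court listings: {len(hits)} new match(es)"
    msg["From"] = config["sender"]
    msg["To"] = ", ".join(config["recipients"])
    lines = [f"- {name}: {listing}" for name, listing in hits] or ["No new matches."]
    msg.set_content("\n".join(lines))
    return msg

def send(msg: EmailMessage, sender: str, app_password: str) -> None:
    """Send via Gmail's SMTP server over SSL (not called in this example)."""
    with smtplib.SMTP_SSL("smtp.gmail.com", 465) as server:
        server.login(sender, app_password)
        server.send_message(msg)

config = load_config(EXAMPLE_CONFIG)
# Made-up listing, for illustration only.
msg = build_summary(config, [("Jane Example", "Sample Court, 10am")])
print(msg["Subject"])  # Court listings: 1 new match(es)
```

Keeping the names and addresses in a separate file means they can be edited without touching the script, which is presumably why Claude split them out.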

I continued to run the script and send the error logs to Claude, until it had sent me three additional files, told me the scraper scripts needed to be in a separate folder, remembered another two files, and rewritten the main script several times. I was getting a bit annoyed at Claude by this point. It was cheerfully accepting each failure and confidently stating the problem to be fixed, but was it just making it up? It was now telling me that my Mac was running Python 3.9, when the tool expected at least Python 3.1. I didn’t bother pointing out how inane that was.

I asked for a full map of what the file structure should look like, and pointed out several errors including a completely made-up file extension. The scrapers finally executed, but all failed to connect. Claude claimed that the URLs for the services had changed since it wrote the scripts, which seemed unlikely as it had only been an hour.

Out of nowhere, Claude asked me to plug a URL into my browser and paste the results so it could read them. It turned out it had simply guessed the search parameters when building the tool, and the page I pasted gave it the real ones. Why not ask me to do this in the first place instead of guessing? It had become clear that this version of Claude had limited ability to search the internet, but seemed determined to pretend it was great at it.
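Guessed parameters fail quietly, which is easier to see in code. A court search page is typically queried through a URL whose parameters carry the search terms; if the parameter names are wrong, the site may simply ignore them. In this Python sketch, the base URL and both sets of parameter names are invented for illustration. The second function shows how the real names can be read out of a search URL copied from the browser, which is roughly what pasting the page did for Claude.

```python
from urllib.parse import parse_qs, urlencode, urlparse

# Hypothetical endpoint, for illustration only.
BASE_URL = "https://courts.example.gov.au/listings/search"

def build_search_url(name: str, date: str) -> str:
    """Build a search URL with properly escaped (guessed) parameter names."""
    return f"{BASE_URL}?{urlencode({'party': name, 'date': date})}"

def params_from_browser(url: str) -> dict[str, list[str]]:
    """Read the parameter names a real search page actually uses."""
    return parse_qs(urlparse(url).query)

print(build_search_url("Jane Example", "2024-05-01"))
# https://courts.example.gov.au/listings/search?party=Jane+Example&date=2024-05-01

copied = "https://courts.example.gov.au/listings/search?partyName=Smith&hearingDate=2024-05-01"
print(params_from_browser(copied))
# {'partyName': ['Smith'], 'hearingDate': ['2024-05-01']}
```

If the real page expects `partyName` and the script sends `party`, nothing errors; the search just comes back empty or unfiltered.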

By the end of the first day I had a tool that successfully checked several online court records against my one test name, and sent an email summary. But there were still some big issues, which I tackled on day two:

- Claude would not provide a list of names from recent rich lists, even if I supplied all the URLs. It told me to sign up for an AFR subscription, and didn’t care that I already had one. I had to manually pull all the names, but Claude helpfully sorted them into JSON format.

- At my prompting, Claude had set the tool to run at 7am each day and keep a log of results, so that each result only appeared in the email the first time it was found. But this meant my computer needed to be switched on and online at 7am every day, or nobody would get the info.

- I asked it for scrapers that look at upcoming hearings, which meant troubleshooting each one.

- The email approach didn’t work for the editor’s purposes; he wanted a cloud-based app that anyone could log into to search all the results. So by the end of day one, I’d planned a different approach.
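The “only report it the first time” log from the second point can be as simple as a file of results already seen. This is a minimal Python sketch, assuming each result can be identified by its name, source and case reference; those field names are hypothetical, and the real tool may key its results differently. The 7am schedule itself would usually come from the operating system, for example a cron entry of `0 7 * * *` (7:00 every day), which only fires if the computer is on at that moment.

```python
import json
import tempfile
from pathlib import Path

def result_key(result: dict) -> str:
    """Identify a result by fields assumed to stay stable between scans."""
    return f"{result['name']}|{result['source']}|{result['case_ref']}"

def filter_new(results: list[dict], seen_path: Path) -> list[dict]:
    """Return only results not seen in earlier scans, and record them."""
    seen = set(json.loads(seen_path.read_text())) if seen_path.exists() else set()
    new = []
    for result in results:
        key = result_key(result)
        if key not in seen:
            seen.add(key)
            new.append(result)
    seen_path.write_text(json.dumps(sorted(seen)))
    return new

with tempfile.TemporaryDirectory() as folder:
    log = Path(folder) / "seen.json"
    hit = {"name": "Jane Example", "source": "source-a", "case_ref": "2024/001"}
    print(len(filter_new([hit], log)))  # 1: first sighting goes in the email
    print(len(filter_new([hit], log)))  # 0: already reported
```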

Day 2

I had been using the terminal to run commands, and then reporting the results to Claude, which prepared updated files for me to download, so I could run them again. But with a $17-per-month subscription, Claude Code could essentially live inside the terminal, doing all the testing and tweaking and executing on its own.

I installed it and gave it access to the files I had from day one. Of course the new Claude had no memory of the project, so I asked web Claude to prepare a prompt I could share with its colleague to bring it up to speed. After that, the majority of day two was Claude working away in the background, and me checking in occasionally to approve changes and make requests.

Once the tool was working, I asked how to turn it into a web app. Claude suggested an AI-focused hosting platform called Railway, and my storage needs appeared to be within its free limits. I would need to open a GitHub account, upload the files to a new repository, then configure Railway to deploy from there. I described how I wanted the site to look and work, and Claude built it. Once that was done, I connected Claude and GitHub so that I could ask for changes in the terminal and see them appear on the live web app within minutes. For example, I added a login page for security, and redesigned the config page to warn people against changing certain settings.

Email was again a problem. The internet has an issue with people using AI tools to automate email sends from authentic-looking addresses, for some reason. Railway blocked all the ports Gmail uses, so I had to find an HTTP-based email service with a free API. I found one, and it works (once you check the spam folder).
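Why an HTTP-based service gets around blocked ports: sending through Gmail uses SMTP ports such as 465 or 587, whereas an email API is just an HTTPS request on port 443, the same port used to load a web page. Here is a minimal sketch using only Python’s standard library. The endpoint, the JSON field names and the API-key header are hypothetical, since each provider documents its own; the article does not name the service used.

```python
import json
import urllib.request

# Hypothetical provider endpoint; real services each define their own.
API_URL = "https://api.email-provider.example/v1/send"

def build_email_request(api_key: str, sender: str, to: list[str],
                        subject: str, body: str) -> urllib.request.Request:
    """Package an email as a JSON POST request sent over HTTPS (port 443)."""
    payload = {"from": sender, "to": to, "subject": subject, "text": body}
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}",
                 "Content-Type": "application/json"},
        method="POST",
    )

def send_email(req: urllib.request.Request) -> int:
    """Send the request and return the HTTP status code (not called here)."""
    with urllib.request.urlopen(req, timeout=30) as resp:
        return resp.status

req = build_email_request("test-key", "alerts@example.com",
                          ["editor@example.com"], "Court listings", "No new matches.")
print(req.get_method(), req.full_url)
```

Because the request looks like ordinary web traffic, a host that blocks mail ports has no reason to stop it; whether the message lands in the inbox is then up to the recipient’s spam filter.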

Next, I asked Claude to re-evaluate its scraping approach for every state, since the existing solution heavily favoured New South Wales. The tool now scraped 10 sources (covering many courts) for 650 names, once a day. I argued with Claude over its ridiculous decision to check the next day’s records instead of today’s, which only returned results if checked after 3.30pm. I asked repeatedly for local times, not UTC. I requested that each entry come with a link to the relevant record.
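The time-zone complaint is a common one: cloud servers often run on UTC, so timestamps and “today’s date” need converting before they make sense to a reader in Sydney. This sketch uses Python’s standard `zoneinfo` module and assumes the Australia/Sydney zone; other states would use their own zone names, such as Australia/Perth. The function names are illustrative, not taken from the tool.

```python
from datetime import date, datetime, timezone
from zoneinfo import ZoneInfo

SYDNEY = ZoneInfo("Australia/Sydney")  # assumed zone; e.g. Australia/Perth for WA

def to_local(utc_time: datetime, tz: ZoneInfo = SYDNEY) -> datetime:
    """Convert a UTC timestamp to local time, daylight saving included."""
    return utc_time.astimezone(tz)

def local_today(tz: ZoneInfo = SYDNEY) -> date:
    """Today's date where the courts are, not where the server is."""
    return datetime.now(tz).date()

# 9pm UTC on 14 January is already 8am on 15 January in Sydney (AEDT, UTC+11).
stamp = datetime(2024, 1, 14, 21, 0, tzinfo=timezone.utc)
print(to_local(stamp).strftime("%d %b %Y, %I:%M%p"))  # 15 Jan 2024, 08:00AM
```

Using a named zone rather than a fixed “+10” offset matters here, because daylight saving shifts the offset for half the year.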

By the end of the second day the tool was more or less done. It scans every day at 7am, taking two hours, sends an email summary to everyone who’s subscribed, and stores all results in a web tool you can search by name or source. As of writing it’s been chugging away for more than a week, and has stored 3564 results.
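Storing results so they can be searched by name or source is the kind of job a small database handles well. A minimal sketch using Python’s built-in `sqlite3` module; the table layout, function names and sample rows are hypothetical, and the real web tool may store its results differently.

```python
import sqlite3

def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Create (if needed) a results table; repeats are blocked by UNIQUE."""
    conn = sqlite3.connect(path)
    conn.execute("""CREATE TABLE IF NOT EXISTS results (
        name TEXT NOT NULL, source TEXT NOT NULL,
        found_on TEXT NOT NULL, link TEXT NOT NULL,
        UNIQUE (name, source, link))""")
    return conn

def add_result(conn: sqlite3.Connection, name: str, source: str,
               found_on: str, link: str) -> None:
    # INSERT OR IGNORE silently skips a row that repeats an existing one.
    conn.execute("INSERT OR IGNORE INTO results VALUES (?, ?, ?, ?)",
                 (name, source, found_on, link))
    conn.commit()

def search(conn: sqlite3.Connection, name: str = "", source: str = "") -> list[tuple]:
    """Partial, case-insensitive name match, with an optional source filter."""
    sql = "SELECT name, source, found_on, link FROM results WHERE name LIKE ?"
    params = [f"%{name}%"]
    if source:
        sql += " AND source = ?"
        params.append(source)
    return conn.execute(sql + " ORDER BY found_on DESC", params).fetchall()

conn = open_store()
add_result(conn, "Jane Example", "source-a", "2024-05-01", "https://example.com/1")
add_result(conn, "Jane Example", "source-a", "2024-05-01", "https://example.com/1")
print(len(search(conn, name="jane")))  # 1: the repeat was ignored
```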

What all of this means

Did I build a web tool with no coding experience? Yes. Though I’m not sure I’d say “anyone” could do it; it took a fair amount of tech literacy. And I have some concerns about the process.

First, you still need a good idea. I’m not sure the concept or methodology were especially sound here, since the vibe we were going for was “notify us if a prominent businessperson appears in court”, but what we got was a huge amount of data. Many people have the same names, and many prominent businesspeople show up in court records for uninteresting reasons. All in all, AI built us a tool, but not one we’ll start relying on, by any stretch.

I suspect the internet will become full of abandoned rough-sketch projects like these.

Second, one of the biggest issues with every chatbot is that it instils a false sense of confidence in its responses. If you’re asking about a subject you know, you’ll spot errors right away. Otherwise, the answers will sound good, but you might discover the errors later, the hard way. The tools are fluent in code the same way they’re fluent in English: they put together phrases that work, which can impress if you don’t know the subject. An expert might look at my project and identify security flaws, inefficiencies, misleading answers, or places where it appears to be doing something it isn’t.

AI companies are keen to push chatbots as PhD-level experts in every field. Just ask your question, and get instant answers or results. But often it feels more like an excellent actor who knows what a PhD-level expert in a given field would say. I’m not sure coding is much different; it’s just a different language.
