I had been using AI very occasionally for a while – one of the free versions. I hated using it – found it fairly useless. Then, recently, persuaded by friends, I buckled and paid for a pro version.

This was definitely better. On some subjects, it provided detailed, worked-through information, clearly presented. I know nothing about gardens, but when my son found something in a flower pot and Google couldn’t tell me what it was, I fed a description into AI. Immediately, the right answer.

Shortly after that, I found myself unable to remember the name of a band I had listened to recently. I only knew a song or two, vaguely. I did, though, have some good details, which I fed into AI: where they were from, how many people were in the band, the type of name they had. The AI failed.

So I tried again on Google – and this time got an answer. Then I went back to AI, gave it the name (Haute & Freddy) and asked why it had not been able to find it. It told me this was natural for an obscure band without a large internet presence. Which would have been a good response – except the band had been covered in the press, including Rolling Stone. I told AI this. It conceded the point. Then it explained that, in fact, the problem had been with my description of the music, which had narrowed its inquiries and made it impossible to find the answer.

This made sense. And it was interesting, too, because I feel this is the point at which a human would have asked the right question: are you sure you’re describing the music correctly? And that is because a human would have sensed the type of mistake another human would be likely to make, or the ways in which communication might have broken down, knowing that music, in all its intangibility, is a particularly difficult thing to describe.

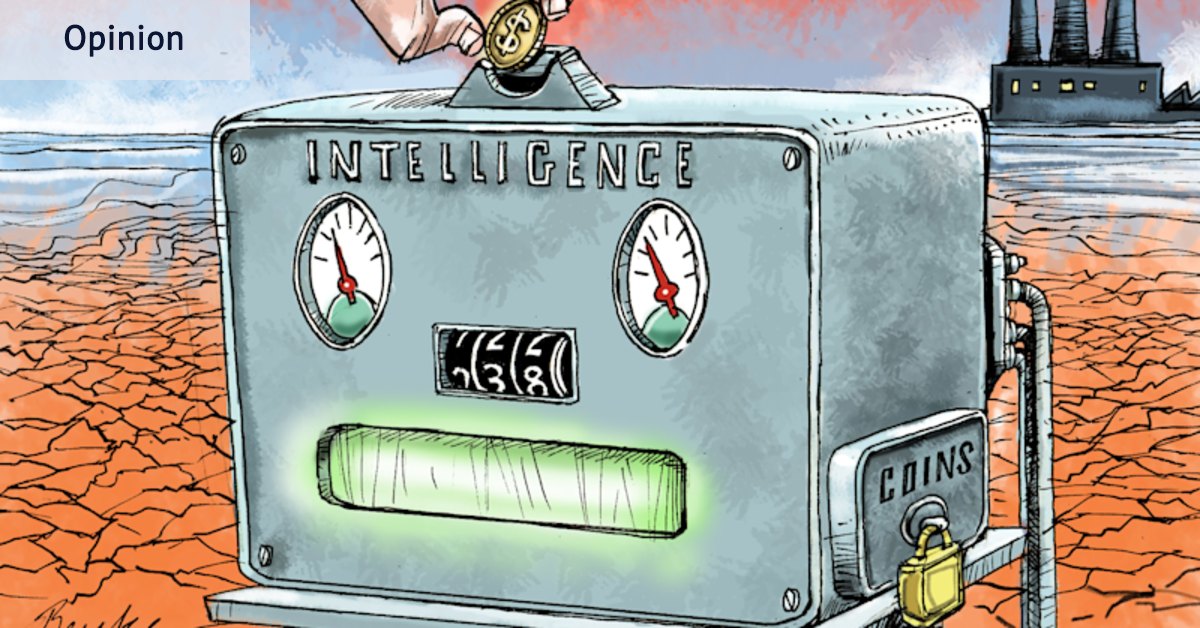

All this brought to mind a recent comic strip by Aram J. French. “We invented a robot that answers questions”, one man says. “We just have to feed it 10 baby giraffes a day.” His companion responds: “But it answers the questions correctly?” The first man: “Oh my goodness no. No no no no no”.

All this is just one person’s anecdotal experience, so I don’t offer it as a sign that AI lacks a bright future – I tend to think the opposite. I also realise that casual users are not really where the gains lie right now: it is people who use AI every day who know how to make the most of it.

Still, I am struck by the absurdity of this moment, partially captured in that cartoon. At the point at which we know we are entering – have entered already – a time of climate crisis, we have found a way to use even more energy.

Another absurdity: as governments (including our own) finally, belatedly turn their attention to the political consequences of lower job security and widening inequality, we have decided this is the moment to embrace a new technology that is already wiping out thousands of jobs.

Or this: at a point in history in which the dominance of oligopolies, in the tech sector particularly, has begun to worry people, we may be about to create another caste of multibillionaires with even more control over our lives.

Which is more or less what Sam Altman, head of perhaps the world’s most powerful AI company, OpenAI, admitted last week. “We see a future where intelligence is a utility, like electricity or water, and people buy it from us on a meter.”

To be fair, in a sense this is already what happens – as when I had to pay to use an AI model I actually found useful. But at this point, that is still a luxury, something I don’t really need. Altman is talking about a world in which AI has become essential, like electricity and water.

I should add that Altman speaks about all this as happening in a world in which AI, because it is so abundant, is very cheap. But as critics quickly pointed out (and Altman half-conceded), his comparison with electricity should point us towards the fact that this never really happens. On any product, there is always some limit. This results in price pressures. And there is always pressure applied by those already invested – coal miners or gas companies or perhaps OpenAI – to make sure things are sold in a particular way that will maximise their profits.

The other thing in the electricity comparison that should make us pause is the fact that, eventually, we discovered electricity had “externalities” in the form of climate change – costs that weren’t captured by the market and reflected in the retail price. A price, in other words, that we all had to pay. Some argue the social and political disruption wrought by the internet age should be seen the same way. As it happens, these externalities have compounded: the political disruptions of social media are making it more difficult to deal with the climate problems caused by another great technological leap forward.

Remarkably, AI seems likely to add to both those costs: social and environmental. But instead of confronting this, we are once again engaging in delusional optimism, putting the potential costs out of our mind in the hope or belief that nobody will ever have to pay the bill. More likely, those debts will be due when most of us are old or no longer here.

I remain optimistic about many uses to which AI will be put. And despite my frustrations in trying to find that band, I don’t think it is a huge problem that technology has not yet figured out how to ask us precisely the right questions. I am more worried by the sense we have still not learned to ask the right questions of technology.

Sean Kelly is a regular columnist and a former adviser to Labor prime ministers Julia Gillard and Kevin Rudd.
